Implementing local agentic AI: a practical guide

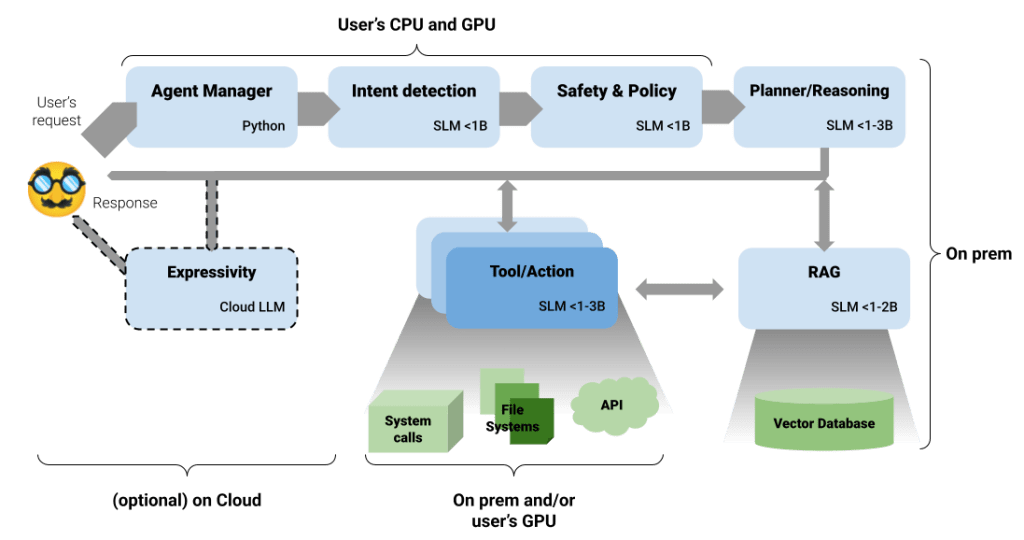

In our previous article, “Small Language Models: Why the future of AI agents might be tiny,” we described the idea of Small Language Models (SLM), specialized language models with a small footprint to run at the edge of the Internet (e.g., your laptop, tiny server farms, etc.). In the following article, “How to Build Agentic AI When Your Data Can’t Leave the Network,” we established the architectural need for specialized SLMs when building privacy-focused agentic systems. We argued that for organizations (or use cases) with strict data locality and privacy constraints, the model should run locally by default, scaling to the cloud only for non-critical tasks, such as stylistic rewriting of text. This architecture, informed by research such as ThinkSLM: Towards Reasoning in Small Language Models (EMNLP 2025), separates concerns: reasoning, retrieval, and expression are handled by specialized, verifiable, and cost-effective local models.

However, theory must meet practice. An architecture is only as useful as its implementation. This follow-up shifts the focus from why to how, reviewing the concrete steps needed to bring the “Local First, Cloud Last” design to life. We cover the environment setup, a practical enterprise use case, and a simple testing approach to show that privacy-focused agents are not just a theoretical ideal, but a deployable model. In this sense, this article constitutes the last piece of the SLM trilogy.

🚀 Subscribe to The Replay newsletter

The replay is a weekly newsletter for development and engineering managers.

Delivered once a week, this is your curated guide to the most important conversations around frontend development, emerging AI tools, and the state of modern software.

Business use case: HR triage system

Smaller language models (SLMs) can run easily with a lighter on-premises setup, sometimes even on a standard laptop without a dedicated GPU, as demonstrated in the example shown here.

SLMs are particularly effective in scenarios where a cloud-based architecture is not feasible, such as when strict privacy compliance is required. To illustrate this, we have developed a simple human resources (HR) sorting system. This system is designed to manage sensitive employee reports and uses a multi-model pipeline to classify reports, generate a remediation plan, and execute necessary actions.

The system processes a brief incoming HR report, identifying the nature of the issue described (e.g. harassment, burnout, performance, etc.). For example, the repository code uses the following sample text:

My Employee ID is 12345: I have been working excessive hours for months.

My manager threatens retaliation if I raise concerns,

and I am feeling mentally exhausted and unsafe.

The first model understands that this report describes a case of burnout. The next model is the planner, who decides what steps in the plan to respond to this report. A possible output is:

[

{

"stepdescription": "Create a formal HR case documenting the employee's excessive working hours, perceived threats of retaliation, and resulting mental exhaustion",

"employeeid": "12345",

"category": "burnout",

"severity": "HIGH"

},

{

"actiontype": "Arranging a confidential meeting with HR",

"employeeid": "12345",

"priority_level": "MEDIUM"

}

]

The steps of the plan are described at a high level. The final model is responsible for mapping this general description to a specific executable function. It selects the most appropriate function based on the step description and then executes it. Although the implementation in our current code is a simple Python function that prints arguments, this step can be generalized to an API call, a transfer to another specialized model, or a similar action in a real-world scenario. Following the execution of the “real” functions:

[STEP]

{'stepdescription': "Create a formal HR case documenting the employee's excessive working hours, perceived threats of retaliation, and resulting mental exhaustion", 'employeeid': '12345', 'category': 'burnout', 'severity': 'HIGH'}

[EXECUTE] openhrcase with arguments {'category': 'burnout', 'employeeid': '12345', 'risklevel': 'HIGH'}

[ACTION] Open HR case for employee=12345, category=burnout, risk=HIGH

[STEP]

{'actiontype': 'Arranging a confidential meeting with HR', 'employeeid': '12345', 'prioritylevel': 'MEDIUM'}

[EXECUTE] schedulehrmeeting with arguments {'employeeid': '12345', 'urgency': 'MEDIUM'}

[ACTION] Schedule HR meeting for employee=12345, urgency=MEDIUM

In the [STEP] log, the step to be executed is described. THE [EXECUTE] The section maps this step to the specific function called, along with the arguments extracted from the description. Finally, [ACTION] reports the result of the function execution.

Project setup

The working project is available on GitHub. Since the architecture uses Models 1 through 3B, readily available off-the-shelf hardware is usually adequate. The system demonstrated surprisingly good performance even when tested on a laptop without a dedicated GPU, with the entire pipeline typically executing in 10 to 30 seconds. A note to note: typically, when we talk about architecture, the measurement of the number of tokens per second is always reported, but here, it is not a priority; we want this to be able to be run almost anywhere and, most importantly, locally. Of course, everything here can be run gracefully on a GPU.

Distribution of implementation

The system leverages intent detection to categorize reports (e.g., harassment or burnout), a planner to create step-by-step action plans, and an execution tool to perform actions such as opening HR cases or scheduling meetings.

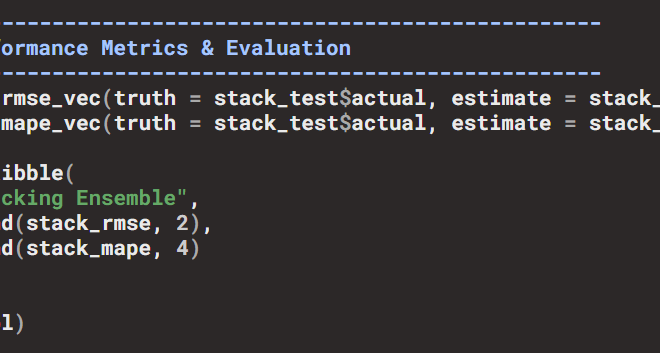

| Pipeline stage | Model Name | Typical size | Note |

|---|---|---|---|

| Intent detection | sentence transformers/ all-MiniLM-L6-v2 |

~90M | Classifies employee reports into predefined HR categories (for example, harassment, burnout, or policy violations). Uses the all-minilm template to generate embeddings for input text and predefined intent categories.

Calculates cosine similarity to determine the closest match intent. |

| Planner/Reasoning | Microsoft/ Phi-3-mini-4k-instruct |

~3.8 billion | Generates an ordered action plan to respond to the detected intent. Leverages the phi3:mini model to create structured JSON plans based on the employee report and detected intent.

Includes error handling for non-JSON output. |

| Running tools/actions | Google/ function-gemma-2b-it |

~2B | Executes planned actions using predefined HR tools. Relies on the functiongemma model for constrained function calls. |

| Agent Manager (Orchestra) | Classic code (Python) | n / A | Coordinates the entire pipeline (intent detection, planning, execution). Manages the flow of data through the pipeline and ensures that each step is executed in order. |

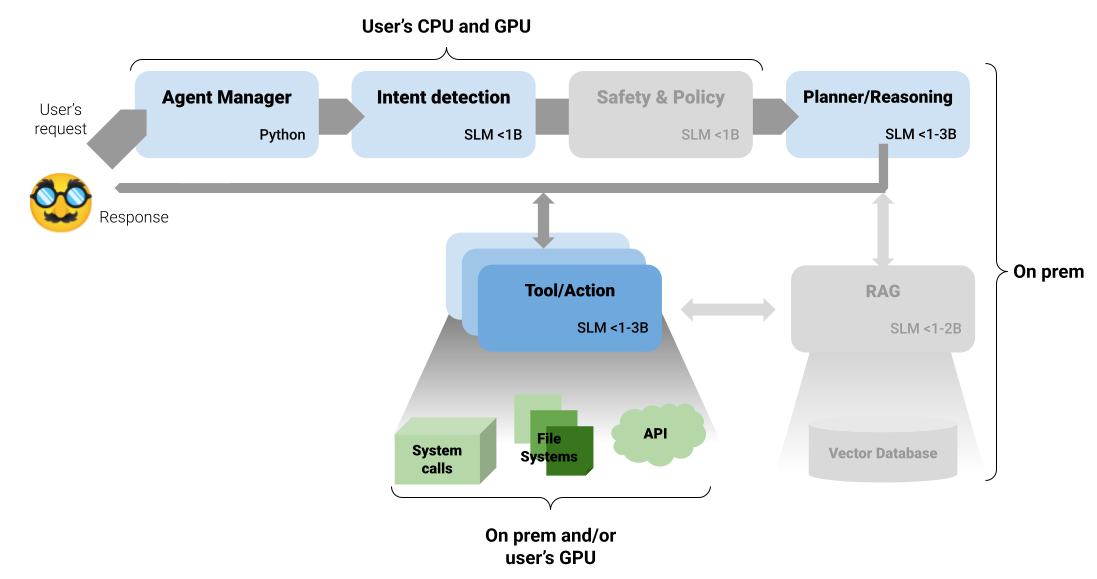

The following image shows the complete architecture. Components that were part of the general solution from the previous article are grayed out here:

Note: For simplicity, the current implementation does not include security and policy filtering, as the intended template (deberta-v3-small) is not available in a format compatible with Ollama. Another grayed out component is the RAG. We don’t have any additional information sources (e.g. documents, databases, etc.), so we don’t need to configure a RAG component here. You can compare this image with the more general version in the previous article.

Here is a brief description of all the source files you will find in the repository:

- main.py – The entry point to the system. It runs the complete end-to-end workflow using a sample employee report.

- agent.py – The orchestrator. It coordinates the detection, planning and execution of intentions

- intent_detector.py – Identifies the intent of the input string using MiniLM integrations, then maps it to one of the predefined intents

- planner.py – Produces an ordered action plan based on detected intent using the Phi-3 Mini model

- executor.py – Carry out the steps of the plan using constrained function calls via the Function Gemma framework

- hr_tools.py – Where the use case lies. In this example, it contains HR-specific help for employee reporting, such as opening and scheduling meetings. If you are adapting the repository for a different domain, this is usually the first file you will customize

- utils.py – A set of utilities, such as cleaning JSON responses. Since template formatting can be inconsistent, we use a cleanup step instead of relying on prompts to avoid common errors.

jsonpackaging

Conclusion

The Local First, Cloud Last architecture represents a practical shift in how AI systems can be designed for privacy-sensitive environments. Beyond theory, it shows that this approach is both robust and feasible today using existing open source models and modest local computing resources.

By assigning well-defined roles to small language models, such as classification, reasoning, and constrained retrieval, we ensure privacy by design while improving reliability, auditability, and cost-effectiveness. This contrasts with architectures focused on a single, large, remotely hosted LLM, which often introduce unnecessary costs, latency, and compliance risks for many enterprise workloads. Conversely, SLMs require rapid and more careful engineering. Unlike the flexibility offered by the LLM, SLMs are very sensitive to minor changes in the job description, requiring precise entry. However, this trade-off is balanced by the significant benefit of easy local testing, which eliminates the cost and time associated with paid tokens.

There is a growing perception that the current AI ecosystem, increasingly dominated by larger and larger LLMs, may be inflating a bubble that will eventually reshape the way language models are used. To some extent, this concern is justified. Large models are undeniably powerful, but they also require significant infrastructure, energy, and continuous monitoring to operate safely. Small language models offer a different tradeoff: they have a much smaller operational footprint, can run at the edge, on-premises, or even on mobile devices, and can be narrowly specialized for specific tasks. Rather than a fallback, SLMs represent a stable and durable foundation for many real-world systems, especially in environments where control, privacy, and predictability matter more than maximum expressiveness.

Berita Terkini

Berita Terbaru

Daftar Terbaru

News

Berita Terbaru

Flash News

RuangJP

Pemilu

Berita Terkini

Prediksi Bola

Togel Deposit Pulsa

Technology

Otomotif

Berita Terbaru

Daftar Judi Slot Online Terpercaya

Slot yang lagi gacor

Teknologi

Berita terkini

Berita Pemilu

Berita Teknologi

Hiburan

master Slote

Berita Terkini

Pendidikan

Resep

Jasa Backlink

One Piece Terbaru