5 principles for designing a contextual multimodal UX

UI/UX design grew up around visual design: building digital product interfaces for screens. Modern multimodal UX design goes beyond the screen, using other modes of interaction such as voice, vision, sensing and haptics, and it offers productivity and safety benefits as a result. Most multimodal products still rely primarily on screen-based interaction, but multimodal UX doesn’t focus only on designing visuals for screens: it focuses on fitting the interaction to the context by progressively disclosing the necessary UI elements. In short, multimodal UX is about creating contextual products that support multiple modes of human-centered communication beyond traditional input/output mechanisms.

Let’s understand how you can design accessible and productive multimodal products by adapting them to context, using strategies such as context awareness, progressive disclosure and fallback modes of communication.

Contextual Input/Output Systems

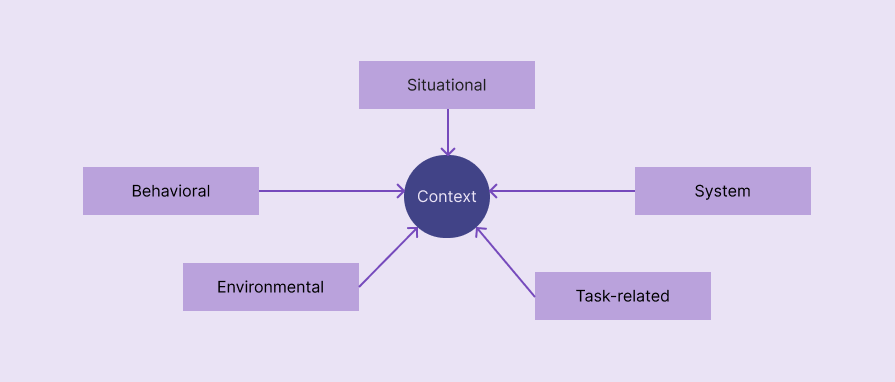

In a multimodal product, context refers to situational, behavioral, systemic, environmental, or task-related factors that determine the most appropriate mode of interaction. Multimodal products seamlessly change interaction modes based on context to improve overall UX.

The following factors define the context of most multimodal products:

- Situational: A particular activity or situation that defines the user’s state. Driving, cooking and working out are common situations that require a change in mode.

- Behavioral: How the user interacts with the system. Past interaction patterns and current behavior detected by the product define behavioral factors. For example, if the user always uses voice mode for a specific user flow, the product automatically enables voice mode for that flow.

- Systemic: System parameters, states and capabilities that affect the selection of the most appropriate interaction mode. For example, a very low battery level limits the use of the camera for vision mode.

- Environmental: The noise level, lighting and social setting around the user.

- Task-related: The complexity of the current task, safety requirements, urgency, and input/output data types.
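
As a rough illustration of how these five factor families could feed a mode decision, here is a minimal Python sketch. Everything in it is an assumption made for the example: the `Context` fields, the `Mode` names, and the noise and battery thresholds are hypothetical, and a real product would tune its rules from user research rather than hard-code them.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Mode(Enum):
    TOUCH = "touch"
    VOICE = "voice"
    VISION = "vision"


@dataclass
class Context:
    # One hypothetical field per factor family from the list above.
    activity: str = "idle"                 # situational: "driving", "cooking", ...
    preferred_mode: Optional[Mode] = None  # behavioral: learned from past use
    battery_percent: int = 100             # systemic
    noise_db: float = 40.0                 # environmental
    task_needs_camera: bool = False        # task-related


def select_mode(ctx: Context) -> Mode:
    """Pick a primary interaction mode from the context (illustrative rules only)."""
    hands_busy = ctx.activity in {"driving", "cooking", "working out"}
    too_noisy = ctx.noise_db >= 70.0         # assumed threshold
    low_battery = ctx.battery_percent <= 10  # assumed threshold

    if ctx.task_needs_camera and not low_battery:
        return Mode.VISION
    if hands_busy and not too_noisy:
        return Mode.VOICE
    if ctx.preferred_mode is Mode.VOICE and not too_noisy:
        return Mode.VOICE
    return Mode.TOUCH  # screen-based interaction as the default
```

For instance, `select_mode(Context(activity="driving"))` picks voice, while the same call with `noise_db=85.0` falls back to touch because voice input would be unreliable.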

Progressive modality

A good multimodal product never confuses users by activating all available communication modes at once, and never annoys them by presenting every mode and asking them to pick one explicitly. Instead, it gradually activates communication modes on demand. Integrating several modes of communication should not make the product more complicated.

Progressive disclosure of context-based communication modes is the right way to implement multimodal UX without increasing product complexity.
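
One way to picture progressive modality in code is a small manager that starts with a single baseline mode and switches others on only when something asks for them. This is a sketch under stated assumptions: the `ModeManager` class and the mode names are hypothetical and not taken from any framework.

```python
class ModeManager:
    """Starts with one baseline mode and activates others only on demand."""

    def __init__(self, baseline="touch",
                 available=("touch", "voice", "vision", "haptics")):
        self.baseline = baseline
        self.available = set(available)
        self.active = {baseline}  # nothing else is on until requested

    def request(self, mode):
        """Activate a mode when the context or the user calls for it."""
        if mode not in self.available:
            return False
        self.active.add(mode)
        return True

    def release(self, mode):
        """Deactivate a mode that is no longer needed; the baseline stays on."""
        if mode != self.baseline:
            self.active.discard(mode)
```

The user never sees a mode picker here: the product calls `request("voice")` when the context suggests voice is useful and `release("voice")` when it is not.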

Redundancy without duplication

Multimodal UX is not about creating separate user flows for each mode of interaction. It’s about improving UX by making interaction modes cooperate and prioritizing them based on context. Distribute input/output requirements efficiently between modes, using redundancy without duplication:

| Comparison factor | Redundancy in modes | Duplicating modes |
| --- | --- | --- |
| Summary | Each mode of interaction presents the same main message or captures the same main input in different, cooperative ways to improve the UX | Separate and duplicated user flows for each interaction mode |
| Number of communication channels active at once | More than one | One |
| Implementation effort | Higher | Lower |
| Implementation in existing products | A redesign is usually necessary | A redesign is not necessary since modes create separate user flows |
| Improved accessibility | Accessibility is further improved through contextual mode prioritization and cooperation | Offers basic accessibility with switchable communication preferences |

You are not limited to one interaction mode at a time. Optimize I/O across modes without unnecessary duplication. For example, Google Maps Driving Mode issues voice instructions only when necessary while keeping visual cues on screen at all times.
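
The navigation example can be sketched as a single message mapped onto cooperating channels rather than two separate flows. This is not how Google Maps is implemented; the `Instruction` type, the `plan_outputs` function and the 300 m voice threshold are assumptions chosen only to show the idea.

```python
from dataclasses import dataclass


@dataclass
class Instruction:
    text: str
    distance_m: float  # distance to the upcoming manoeuvre


def plan_outputs(instr, voice_threshold_m=300.0):
    """Map one message onto cooperating channels instead of separate flows."""
    # The visual channel always carries the message.
    outputs = {"visual": f"{instr.text} in {instr.distance_m:.0f} m"}
    # The voice channel reinforces it only when the manoeuvre is close.
    if instr.distance_m <= voice_threshold_m:
        outputs["voice"] = instr.text
    return outputs
```

Both channels draw on the same `Instruction`, so the message stays consistent across modes and nothing has to be authored twice.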

Failover modes

Failover modes help users continue the current user flow and achieve their goals even if the current mode of interaction fails due to a system, permission, hardware, or environmental issue. The transition between the primary (failing) mode and the failover (alternate) mode should be seamless and should preserve the current state of the task.

Here are some examples:

- A gesture-enabled music app switches to touchscreen interaction in a dimly lit environment.

- A voice-activated AI assistant suggests using the keyboard in a very noisy environment.

- The barcode scanner of an inventory management app fails due to missing camera permissions or a hardware issue, and the app falls back to manual product search.
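
The barcode scanner example can be sketched as a priority-ordered chain of handlers that share one task state, so the fallback resumes the task instead of restarting it. All names here (`TaskState`, `ModeUnavailable`, `run_with_failover` and the two handlers) are hypothetical, and the scanner failure is simulated.

```python
from dataclasses import dataclass, field


class ModeUnavailable(Exception):
    """Raised when a mode cannot run (permission, hardware, environment)."""


@dataclass
class TaskState:
    # Shared by every mode so a fallback continues the same task.
    query: str = ""
    log: list = field(default_factory=list)


def scan_barcode(state):
    # Simulated failure of the primary mode.
    raise ModeUnavailable("camera permission missing")


def manual_search(state):
    return f"results for '{state.query}'"


def run_with_failover(state, handlers):
    """Try each (name, handler) pair in priority order with the same state."""
    for name, handler in handlers:
        try:
            result = handler(state)
            state.log.append(f"{name}: ok")
            return result
        except ModeUnavailable as err:
            state.log.append(f"{name}: failed ({err})")
    raise ModeUnavailable("no interaction mode available")
```

Calling `run_with_failover(TaskState(query="blue widget"), [("scanner", scan_barcode), ("manual", manual_search)])` records the scanner failure in the log and then completes the search manually with the query the user already entered.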

Expanding Accessibility

Implementing multimodal UX is not only a way to improve UX for general users, but also a practical way to improve usability for people with disabilities. When your product properly follows multimodal UX principles, its accessibility improves as a natural consequence. Multimodal UX shouldn’t be a separate accessibility mode: it should blend into the overall UX of the product, prioritizing accessibility and helping everyone use your product productively.

Here are some best practices to maximize overall accessibility while adhering to multimodal UX:

- Implement multiple modes of communication, but don’t overload users with them. Instead, prioritize one mode (or a few modes) and back it up with fallback modes.

- Consider system accessibility settings before changing interaction modes.

- Share input/output details across the prioritized communication channels with both multimodality and accessibility in mind: use redundancy, not duplication.

- Multimodal UX is not a separate accessibility design concept, so keep following general UI accessibility principles, such as using clear typography.
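
The second practice, checking accessibility settings before switching modes, might look like the sketch below. The `AccessibilitySettings` flags are hypothetical stand-ins: real platforms expose their own settings APIs, and the adjustment rules here are only one plausible policy.

```python
from dataclasses import dataclass


@dataclass
class AccessibilitySettings:
    # Hypothetical flags; a real app would read these from the platform.
    screen_reader_on: bool = False
    prefers_no_audio: bool = False


def apply_accessibility(ranked_modes, settings):
    """Adjust a context-ranked list of modes to respect user settings."""
    modes = list(ranked_modes)
    if settings.prefers_no_audio:
        # Never switch to a mode the user cannot or does not want to use.
        modes = [m for m in modes if m != "voice"]
    if settings.screen_reader_on and "voice" in modes:
        # Move spoken interaction to the front for screen reader users.
        modes.remove("voice")
        modes.insert(0, "voice")
    return modes or ["touch"]  # assumed last-resort mode
```

The context still proposes the ranking; the settings only filter and reorder it, which keeps accessibility inside the main flow instead of in a separate mode.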

FAQs

Here are some common questions about contextual design in multimodal UX:

Should we use only one mode of communication at a time?

No. You can use multiple communication modes simultaneously, as long as you avoid mode overload and keep all active modes synchronized. A personal assistant product that accepts both gesture and voice commands is one example.

Is the screen the main mode of interaction that initiates other modes?

Yes, for most digital products running on computers, tablets and phones. However, some digital products running on special devices mainly use screenless interaction modes for initiation, following the Zero UI approach. Saying “Hey Google” to a Google Home device is one example.

The article 5 Principles for Designing Contextual Multimodal UX appeared first on the LogRocket blog.
